The new Key Stage 2 tests measure quite different aspects of pupil attainment to the 2015 ones. Schools will differ in how well they have prepared for these new tests and here we explore the extent to which there are local authority differences in success rates across the new and old tests.

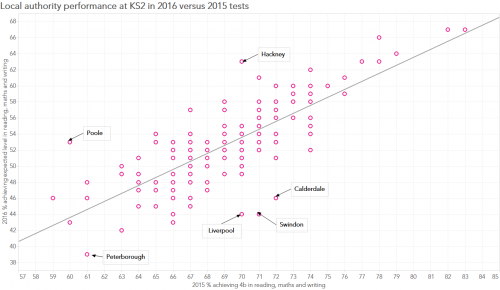

The chart below shows each local authority’s overall performance, as measured by the proportion of pupils achieving the ‘expected’ score, in 2016 and 2015. The bar has been raised in 2016 so that the proportion passing is about 16 percentage points lower overall. Areas doing relatively well overall in these new tests include: Wandsworth, Bristol, Havering, Slough, Croydon, Kent, Gateshead, Barking and Dagenham, Hackney and Poole. Areas doing relatively poorly compared to 2015 include: Swindon, Liverpool, Calderdale, Dorset, West Sussex, Stoke-on-Trent, Oldham, Peterborough, St Helens and Wirral.

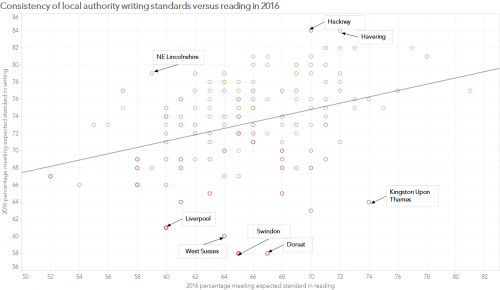

However, one element of this overall metric – writing – was moderated across schools by the local authority and so there is clearly some concern that standards were not consistent across the country. If we compare the 2016 writing and reading figures in local authorities we can see some very clear disparities. Local authorities such as Hackney, Havering and NE Lincolnshire have very high writing scores, given their reading performance. Local authorities such as Liverpool, West Sussex, Swindon, Dorset and Kingston Upon Thames have very low writing scores, given their reading performance. Of course, it is perfectly possible that children across West Sussex have particular difficulties in writing compared to reading. But it seems more likely that moderation within this local authority was particularly harsh.

Our suspicion is that consistency in moderation across local authorities is much worse in 2016 than it was in 2015. It is notable that the correlation between local authority reading and writing scores is 0.35 in 2016 compared to 0.84 in 2015 (admittedly the 2015 figure is for level 4 in each subject). This may be because schools and local authorities are not yet familiar with the new expected standard. Michael Tidd has written a series of posts (see here for an example) arguing that the guidelines were not precisely specified, making it impossible for consistency to be achieved.
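
For readers who want to check a correlation like this against the published local authority tables, here is a minimal sketch of the Pearson calculation in plain Python. The function name `pearson` and the five pairs of percentages are invented for illustration; they are not the real 2015 or 2016 figures, and we are not claiming this is exactly how the numbers above were produced.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences of at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of cross-products and sums of squares around the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one series is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical LA-level percentages reaching the expected standard
# (one value per local authority), invented purely for illustration.
reading = [60.0, 66.0, 70.0, 58.0, 72.0]
writing = [74.0, 70.0, 78.0, 69.0, 73.0]

r = pearson(reading, writing)
print(f"reading vs writing correlation: {r:.2f}")
```

A value near 1 would mean that authorities with high reading results also tend to have high writing results; a value well below that is what prompts the concern about consistency.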

Given these concerns about the writing moderation this year, perhaps it would be safer to judge overall performance on maths and reading only. On this metric local authorities such as Kingston, who appear to have judged writing more harshly than others, should be very pleased with their results.

Edit: if we compare the 2016 writing standard against every other published local authority metric (e.g. 2015 writing, 2016 reading, maths, SPAG, etc…), this is our judgement of where writing moderation has been far too harsh (in no particular order):

- Swindon

- Dorset

- West Sussex

- West Berkshire

- Richmond

- Merton

- Kingston

- Brent

- Barnet

- Shropshire

- Calderdale

- Liverpool

- Cheshire East

By contrast, we feel that writing moderation may have been too generous in these areas:

- Gateshead

- Northumberland

- South Tyneside

- Salford

- Warrington

- Bradford

- Doncaster

- Hull

- NE Lincolnshire

- N Lincolnshire

- Leicester

- Norfolk

- Central Bedfordshire

- Hackney

- Newham

- Tower Hamlets

- Havering
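
The lists above rest on our judgement across several metrics. One simple way to approximate the idea with a single comparison is to fit a straight line predicting writing from reading and look at which authorities sit furthest from it. The sketch below does that with an ordinary least-squares fit; the helper names `fit_line` and `writing_residuals`, the authority labels and all the figures are hypothetical, and a large residual is only a prompt for further investigation, not evidence about moderation in itself.

```python
def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for ys predicted from xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def writing_residuals(scores):
    """Map each authority to (actual writing - writing predicted from reading).

    `scores` maps an authority name to a (reading_pct, writing_pct) pair.
    """
    names = list(scores)
    reading = [scores[name][0] for name in names]
    writing = [scores[name][1] for name in names]
    slope, intercept = fit_line(reading, writing)
    return {
        name: w - (intercept + slope * r)
        for name, r, w in zip(names, reading, writing)
    }


# Hypothetical figures, invented for illustration only.
scores = {
    "LA A": (60.0, 70.0),
    "LA B": (65.0, 75.0),
    "LA C": (70.0, 80.0),
    "LA D": (65.0, 60.0),  # writing well below what reading would predict
    "LA E": (65.0, 90.0),  # writing well above what reading would predict
}

residuals = writing_residuals(scores)
# Most negative residual first (candidate "harsh"), most positive last.
ranked = sorted(residuals, key=residuals.get)
for name in ranked:
    print(f"{name}: {residuals[name]:+.1f} percentage points")
```

Repeating the same exercise with maths, GPS or the previous year's writing as the predictor would show whether an authority stands out on every comparison or only on one.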

I wonder what will be done with this data? I suspect nothing. It would seem logical for moderators to work collaboratively and across different authorities. If writing examples and judgements were shared online, this would enable greater transparency as well as supporting judgements in other year groups.

Greater collaboration across local authorities in moderation is needed. We have written about comparative judgement tools for doing this before (e.g. http://ffteducationdatalab.org.uk/2015/11/josh-and-the-dragons-measuring-creative-writing/). But I think the blog posts of Michael Tidd make it clear that the STA needs to issue more specific guidance to allow consistent moderation to take place.

This is interesting given that the tick list approach was introduced partly to assuage criticism that a best fit approach was subjective or open to interpretation. It was the first year of course, exemplification was late, and the time consuming approach contained in the exemplification was impractical. Agreement trialling was not held either. The reading test is not a useful yardstick I suspect, with huge variation in year on year performance in some schools and LAs and variation between authorities as noted above.

As a school that was moderated harshly by Cheshire East – I would agree that writing moderation across the country needs to change. We need to have consistency – for instance moderators should all be of a high calibre and know Y6 writing. Cheshire East moderators were a mixture of infant teachers and consultants who hadn’t taught for years. Trafford were all Y6 teachers – those who teach the year group, and have knowledge of the curriculum and standards. It should be a test. Two pieces of writing on different genres. That way we would be comparing like for like – in class and across schools and nationally. Or if not a test it should be looking at children’s books and a best fit judgement. Rather than the very subjective mess which we are going to recreate again this year.

Thank you for this research – it is another piece of evidence to show that we have made progress in writing.

An excellent piece of work – thank you.

How would we go about defining the position of our own LA please?

Thanks Paul. You can look up the values for your LA in Tables L2 and L3 in this spreadsheet: https://www.gov.uk/government/uploads/system/uploads/attachment_data/file/549178/SFR39_2016_Tables.xlsx. There’s also a GPS (SPaG) vs writing chart you can see here: https://public.tableau.com/profile/rebecca.allen#!/vizhome/shared/FCKJG85TB

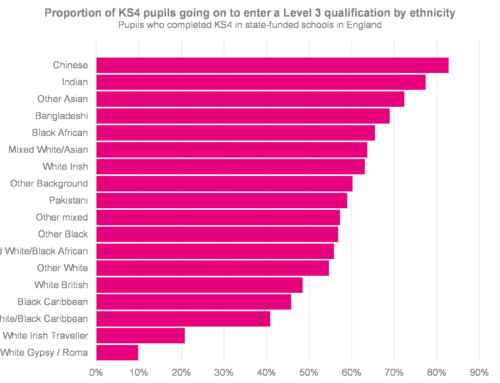

It’s interesting that the majority of LAs where you have suggested the writing moderation was too generous are fairly deprived. We have found that pupils from more deprived backgrounds are more likely to underperform in the test, where they find the conditions more stressful, whereas teacher assessment is carried out in a more familiar setting and so they are better able to demonstrate what they know and can do.

You may be right, some of those LAs may have over-generous moderation, but it’s also pretty insulting to insinuate that they must be fiddling the system (deliberately or otherwise) and to rule out the possibility that they might just be teaching writing better than other subjects. I agree that it merits further investigation, but until you’ve got any actual evidence that moderation in these LAs is over-generous, it is totally inappropriate to suggest summarily removing that data and taking away the one measure that allows deprived LAs to compete on a more equal footing.

Hi Stephen. Thanks for commenting. I disagree that we are insinuating that any local authority is fiddling the system or acting in bad faith. We say that we *suspect* that moderation in 2016 was inconsistent between local authorities. Also on the issue about tests being biased against disadvantaged pupils, some would argue that it is teacher assessment that is biased against them. See for instance https://thewingtoheaven.wordpress.com/2015/10/11/tests-are-inhuman-and-that-is-what-so-good-about-them/

A concern I have is that some children diagnosed with dyslexia had no chance of meeting expected standards in WRITING due to consistently spelling words incorrectly. However they had already been ‘tested’ for spelling even though their composition and effect, engagement, use of sentence types etc were consistently of the expected standard. Will they ever reach the expected standard, answer NO ! They have a disability and are therefore being discriminated against. I have fed this back to Cheshire East moderators and they did acknowledge that the guidance is very black and white. Anyone else find the same issues?

“it seems more likely that moderation within this local authority was particularly harsh.”

Does it? LAs should certainly look at their results and consider the question, but to assume that moderation is the reason for either higher or lower than mean performance is somewhat premature. It’s quite possible that the effect of the LA’s moderation was actually neutral overall – it’s just that the schools’ submitted results were lower/higher in the first place.

Thank you for your comment. We plan to write about the variation in writing standards achieved across schools within local authorities when we have access to the data in a few weeks’ time.

A very useful article but I don’t agree that this discrepancy must be due to different standards of moderation. Only a minority of schools were moderated and they may have filed results closer to the norm (either higher or lower than the LA’s unmoderated schools). I think that the root of the problem is more likely in a combination of unclear criteria and a moderation process that enables schools to know whether they are being moderated or not before they upload their assessments.

Peter: “a moderation process that enables schools to know whether they are being moderated or not before they upload their assessments.”

This is a really important point. I’ve not been involved with moderation before, but I understand that it changed this year, such that teachers could change their assessments after schools had been selected for moderation. This absolutely cannot be allowed, because it allows unscrupulous teachers/school leaders to game the system. The only fair way for moderation to be carried out is for initial assessments to be submitted, the local authority to then select schools for moderation, and assessments can then *only* be changed at the direction of the moderators. Anything else makes a mockery of due process.

(I’m not saying there are huge numbers of teachers or schools that would do this, and I’m sure it would only be a very small minority, but I’m willing to bet there are *some* that do.)

Excellent point Stephen.

Stephen raises a fair point. The system originally proposed by the DfE was that schools would submit data before moderation, but then they had to change that because they hadn’t left enough time for schools to actually do the assessment. The result was a return to the old system that meant schools knew in May whether or not they would be moderated, but didn’t have to submit data until June. It means that for schools which were moderated – there was the opportunity to spend June “teaching” to fill gaps to get the best outcomes they could, while schools who knew they were “off the hook” could submit pretty much whatever suited them, knowing that no-one was ever likely to check. It is a farce – and I have heard of schools preparing two sets of data in the lead-up to that notification date, a bit like crooked accountants keeping two sets of books.

So yes, the system was definitely open to ‘corruption’, and inevitably, therefore, I’m sure some happened. Alongside the fact that such schools probably had minimal support with the application of the framework in the first place, it’s no wonder that results are all over the place. The really interesting story will be to see (a) how results compare from moderated to unmoderated schools – particularly in comparison to their previous record; and (b) what variation is like more generally at school-level, particularly compared to prior attainment and similar factors.

Exactly, Stephen. I don’t even think that many schools were unscrupulous. The lack of clarity of the criteria and the uncertain status of the marked examples meant that there was plenty of room for interpretation. This was particularly shameful as one of the main purposes of the abolition of levels was to make assessment more precise.

Michael Tidd’s comment of September 8th is REALLY important. If there is a clear difference between moderated and unmoderated results then this should be highlighted for all to see.

Equally worrying is whether we are moderating ‘unaided’ work or work that has been marked, edited and re-written – before being written up in best, albeit unaided at that stage, before being judged and moderated.

The former – truly unaided work – is what we should be judging the children on, because it shows what they can achieve: what has been learned and applied by them without direct support. The latter may be an excellent way to judge the quality of teacher-marking and advice and support, but is not an effective way of judging what children have learned and can apply for themselves.

Kieran, as a moderator, we were advised that work was judged as independent if it was clearly edited and improved by the child, with very minimal teacher input. I agree with this, as no competent adult would ever submit a piece of writing themselves without first redrafting it in some way. Where teacher marking was excessive, then that piece could not be considered independent and did not form part of the judgement. This had big implications for some schools running up to moderation, as the Year 6 teachers had to move away from their marking policy which was giving their children too much scaffolding and advice. Obviously there is the suspicion that some people may try to hide previous drafts that have been heavily marked, but it is up to the skill of the moderator to spot this, which is certainly possible. I, for one, was presented with a range of work by all schools I moderated that showed the full writing process of the children. If you asked me to sit down and write an extended piece in a test, it would not show my actual ability as a writer. Editing and redrafting, done as independently as possible, is an essential part of the process, not an add on at the end.

My concern is the size of the sample on which the evidence of being judged to be too harsh or too generous is based. STA require a 25% sample of schools within an LA to be moderated but within that only 15% of KS2 pupils’ and 10% of KS1 pupils’ evidence is scrutinised. In my LA, that equated to only 103 Year 6 pupils’ work being looked at.
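
To put rough numbers on those percentages, here is a back-of-envelope sketch in Python. It assumes the sampled schools are of average size and that the pupil percentage applies evenly within each of them; neither assumption comes from the STA guidance, the function name `moderated_share` is made up for the example, and the implied cohort size is only an illustration of the arithmetic, not a published figure.

```python
def moderated_share(school_fraction, pupil_fraction):
    """Fraction of an LA's pupils whose work is scrutinised.

    Assumes sampled schools are of average size, so the two
    fractions can simply be multiplied together.
    """
    return school_fraction * pupil_fraction


# 25% of schools moderated, 15% of KS2 pupils within each of them.
ks2_share = moderated_share(0.25, 0.15)
# 25% of schools moderated, 10% of KS1 pupils within each of them.
ks1_share = moderated_share(0.25, 0.10)

# Under the same assumptions, 103 scrutinised Year 6 pupils would
# correspond to a Year 6 cohort of roughly this size in the LA.
implied_cohort = 103 / ks2_share

print(f"KS2 pupils scrutinised: {ks2_share:.2%}")
print(f"KS1 pupils scrutinised: {ks1_share:.2%}")
print(f"Implied Year 6 cohort: about {round(implied_cohort)}")
```

On these assumptions fewer than one Year 6 pupil in twenty-five has their writing looked at by a moderator.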