Value added data, calculated by a number of different methods over the years, has been a feature of school performance tables since 2003.

In principle, the idea is sound.

Comparisons of schools’ raw attainment measures often say more about schools’ intakes than they do about the quality of teaching and learning.

So if the state is to intervene in poorly-performing schools then surely it should identify them after having removed the impact of prior attainment?

Often, however, value added (VA) measures are interpreted as measures of school effectiveness. They aren’t. They are just descriptive statistics about attainment that take account of pupils’ prior attainment.

They would only be measures of school effectiveness if pupil attainment was solely determined by prior attainment and school effectiveness.

To isolate the part of pupils’ results attributable to teaching and learning we would have to remove the impact of all other factors that contribute to pupil attainment, including everything that happens at home, as well as measurement error in both current and prior attainment.
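One way to make this argument concrete is a simple additive sketch. The decomposition and all of the symbols below are our own illustrative assumptions, not part of any official VA methodology. Suppose the attainment $y_{ij}$ of pupil $i$ in school $j$ is made up of a part predicted by prior attainment $p_{ij}$, a school contribution $s_j$, everything else in the pupil's life $h_{ij}$, and measurement error $\varepsilon_{ij}$:

$$
y_{ij} = f(p_{ij}) + s_j + h_{ij} + \varepsilon_{ij}
$$

A value added score for school $j$ with $n_j$ pupils is then the average gap between actual attainment and the attainment expected from prior attainment alone:

$$
\mathrm{VA}_j = \frac{1}{n_j} \sum_{i=1}^{n_j} \bigl( y_{ij} - \hat{f}(p_{ij}) \bigr) \approx s_j + \bar{h}_j + \bar{\varepsilon}_j
$$

Under these assumptions, $\mathrm{VA}_j$ would equal the school contribution $s_j$ only if the averages $\bar{h}_j$ and $\bar{\varepsilon}_j$ were both zero for every school, and if the estimate $\hat{f}$ were itself unaffected by error in the prior attainment measure.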

Choice of prior attainment measure

Key Stage 2 VA measures are also affected by the choice of prior attainment measure. In performance tables they have always been calculated on the basis of attainment at Key Stage 1. But as we have shown previously, this disadvantages junior schools.

At a push, the Foundation Stage Profile could be used as a prior attainment measure as we showed here. Attempts to introduce a Reception baseline measure for this failed due to a lack of comparability of the results produced by three different providers. Plans may well be resurrected in future although they are likely to face plenty of opposition.

Is there another way?

Let’s assume that the state will continue to try to intervene in poorly performing schools. Is there another way of identifying them using the data currently available?

We know that disadvantage is associated with attainment, so would it be possible to identify schools whose performance appears to be poor compared to schools with similar intakes?

To answer this, we first need to think what other pupil data is available besides data on attainment. There isn’t much, though: ethnicity, first language, gender, age, a history of schools attended and a history of free school meal eligibility.

We do know more about the areas in which pupils live. Firstly, there are Income Deprivation Affecting Children Index (IDACI) scores, which are available for small neighbourhood areas (lower super output areas).

There are also a number of 2011 UK census indicators available for output areas that are associated with pupil attainment, including:

- % of households that are rented

- % of total population aged 16+ educated to national qualifications framework level 4 (broadly speaking, higher education) or above

- % of total population aged 16+ that are unemployed

- % of total population aged 16+ in full-time employment

- % of total population aged 16+ in socio-economic groups 1 and 2 (managers, directors and professional occupations)

We combine the indicators above into a single socio-economic index using principal components analysis. (This assumes that all the indicators are correlated with an underlying scale running from most advantaged to most disadvantaged.)
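To illustrate the idea of a single index built from several correlated indicators, here is a minimal sketch. It is not the code behind our figures. The area values are invented, only three of the five indicators are used for brevity, and power iteration stands in for a library PCA routine. The names `areas`, `standardise`, `first_component` and `LEVEL4` are all hypothetical.

```python
from statistics import mean, pstdev

# Hypothetical indicator values for six output areas (not real census data).
# Columns: % households rented, % aged 16+ at level 4 or above, % aged 16+ unemployed.
areas = {
    "A": [60, 10, 12],
    "B": [50, 15, 10],
    "C": [40, 25, 8],
    "D": [30, 30, 6],
    "E": [20, 40, 4],
    "F": [10, 50, 3],
}
LEVEL4 = 1  # column used to orient the index so that higher means more advantaged


def standardise(column):
    """Rescale one indicator to mean 0 and standard deviation 1."""
    m, s = mean(column), pstdev(column)
    return [(v - m) / s for v in column]


def first_component(rows, iterations=200):
    """Approximate the first principal component's loadings by power iteration."""
    n, k = len(rows), len(rows[0])
    # Correlation matrix of the already standardised indicators.
    corr = [[sum(r[i] * r[j] for r in rows) / n for j in range(k)] for i in range(k)]
    vec = [1.0] * k
    for _ in range(iterations):
        vec = [sum(corr[i][j] * vec[j] for j in range(k)) for i in range(k)]
        norm = sum(v * v for v in vec) ** 0.5
        vec = [v / norm for v in vec]
    return vec


columns = [standardise(col) for col in zip(*areas.values())]
rows = [list(r) for r in zip(*columns)]  # one row of z-scores per area
loadings = first_component(rows)
if loadings[LEVEL4] < 0:
    # The sign of a principal component is arbitrary, so fix it by convention.
    loadings = [-w for w in loadings]

# Index score per area: weighted sum of its standardised indicators.
index = {name: sum(w * z for w, z in zip(loadings, row)) for name, row in zip(areas, rows)}
```

In this toy data the three indicators move together, so the index simply orders the areas from A (most disadvantaged) to F (most advantaged). With real data the first component captures only part of the variation, which is why the assumption of a single underlying scale matters.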

Finally, we grab some school-level measures, including the percentage of pupils eligible for free school meals in the past six years, the percentage of pupils whose first language is not English and the percentage of pupils resident in the 30% most and least deprived areas according to IDACI.

Are these factors associated with KS2 performance?

Using our set of pupil, small area and school characteristics, we model pupils’ Key Stage 2 maths scores using linear regression. Taken together, the characteristics are very weak predictors. Just 11% of the variation in pupils’ scores can be accounted for. By contrast, the DfE KS1-KS2 value added model accounts for around 60% of the variation.

It is likely we would do far better if we had more data on pupils’ own circumstances, rather than the areas in which they live. These might include measures such as household income, parental occupation and parental level of education.

But we can do slightly better if we aggregate our data to school-level and model schools’ scores. Then, 26% of the variation in schools’ average KS2 maths outcomes can be explained by the characteristics data available.
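Why should the same characteristics explain more at school level than at pupil level? The sketch below uses invented numbers to show the mechanism. Averaging within schools removes pupil-level variation that an area or school characteristic can never account for. It assumes a single predictor and a straight-line fit, which is far simpler than the models described above, and it does not attempt to reproduce the 11% or 26% figures. All names and values are hypothetical.

```python
from statistics import mean


def r_squared(x, y):
    """Share of the variation in y accounted for by a straight-line fit on x."""
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return 1 - ss_res / ss_tot


# Hypothetical data: four schools, each with one intake index value,
# a school-specific deviation and the same spread of pupil-level noise.
school_index = [-2, -1, 1, 2]
school_deviation = [1, -1, -1, 1]
pupil_noise = [-6, -2, 2, 6]

pupil_x, pupil_y, school_y = [], [], []
for idx, dev in zip(school_index, school_deviation):
    scores = [100 + 2 * idx + dev + noise for noise in pupil_noise]
    pupil_x.extend([idx] * len(scores))  # every pupil inherits the school's index
    pupil_y.extend(scores)
    school_y.append(mean(scores))

pupil_r2 = r_squared(pupil_x, pupil_y)         # variation between individual pupils
school_r2 = r_squared(school_index, school_y)  # variation between school averages
```

Here the fitted line is identical in both cases, but the pupil-level R-squared is about 0.32 while the school-level figure is about 0.91, because the pupil-level noise cancels out in each school's average.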

We pursue this approach further in the following section. So that we can compare it more closely with the current DfE floor standard definition, we model the headline KS2 measure: the percentage of pupils achieving the expected standard in each of reading, writing and maths. We’ll refer to this from now on as the context-adjusted method.

(Calculations here are quite rough and ready – the results are intended to be indicative rather than definitive.)
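As a rough illustration of what a context-adjusted score could look like, the sketch below treats it as the gap between a school's actual result and the result predicted from its intake. That reading is an assumption on our part. The single predictor (percentage of pupils eligible for free school meals), the school names and every number are invented.

```python
from statistics import mean

# Hypothetical schools: (% eligible for free school meals, % at the expected standard).
schools = {"A": (10, 58), "B": (20, 62), "C": (30, 50), "D": (40, 48), "E": (50, 32)}


def fit_line(x, y):
    """Ordinary least squares for one predictor; returns (slope, intercept)."""
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


fsm = [values[0] for values in schools.values()]
attainment = [values[1] for values in schools.values()]
slope, intercept = fit_line(fsm, attainment)

# Context-adjusted score: actual attainment minus attainment predicted from intake.
adjusted = {
    name: actual - (intercept + slope * pct_fsm)
    for name, (pct_fsm, actual) in schools.items()
}

lowest_raw = min(schools, key=lambda name: schools[name][1])
lowest_adjusted = min(adjusted, key=adjusted.get)
```

In this toy example school E has the lowest raw attainment, but school A has the lowest context-adjusted score: its results are respectable in absolute terms yet lower than its relatively advantaged intake would predict.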

Comparing three different approaches to defining a floor standard

Based on provisional data, 720 schools fell below the 2016 floor standard: fewer than 65% of their pupils met the expected standard in reading, writing and mathematics, and the school’s value added score was below a certain threshold in at least one of these three subjects. This is a slightly higher number than the 665 confirmed to be below the floor in today’s Statistical First Release.
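The logic of the floor standard can be sketched as a small function. This is a sketch of one reading of the rule, not an official implementation. It assumes a school is below the floor only when it misses the attainment threshold and also has a low value added score in at least one subject. The value added thresholds in the code are illustrative placeholders; the text above does not state them. The function name and arguments are hypothetical.

```python
def below_floor(pct_expected, va_scores, attainment_floor=65.0, va_thresholds=None):
    """Return True if a school is below the floor under this reading of the rule.

    pct_expected: % of pupils meeting the expected standard in reading, writing and maths.
    va_scores: dict of value added scores keyed by subject.
    """
    if va_thresholds is None:
        # Illustrative placeholder thresholds (an assumption, not taken from the text).
        va_thresholds = {"reading": -5.0, "writing": -7.0, "maths": -5.0}
    meets_attainment = pct_expected >= attainment_floor
    meets_progress = all(va_scores[subject] >= t for subject, t in va_thresholds.items())
    # Passing either test is enough to be above the floor.
    return not (meets_attainment or meets_progress)


low_in_one_subject = {"reading": -6.0, "writing": 0.0, "maths": 0.0}
average_progress = {"reading": 0.0, "writing": 0.0, "maths": 0.0}

high_attaining = below_floor(70.0, low_in_one_subject)    # attainment test passed
good_progress = below_floor(50.0, average_progress)       # progress test passed
flagged = below_floor(50.0, low_in_one_subject)           # both tests failed
```

Note how a single low subject score is enough to fail the progress test, which is why this rule can pick up schools whose overall results look reasonable.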

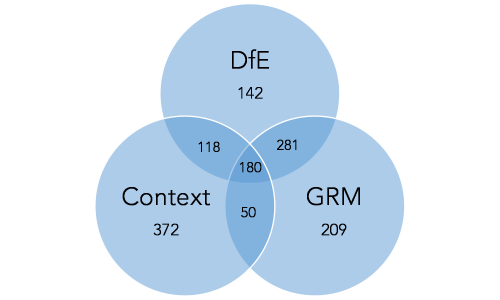

We compare this set of schools to the 720 lowest scoring schools on the context-adjusted method described above, and the 720 lowest scoring on the ‘overall’ KS1-KS2 value-added measure for grammar, punctuation and spelling; reading; and maths (GRM) that we calculated in this blogpost.

There is some overlap between these three approaches to defining a floor standard, but also some differences.

The current DfE floor standards may pick up a number of schools which achieved decent overall measures of attainment given the context of their pupil intakes, but achieved low VA scores in just one subject.

Similarly the context-adjusted method may identify schools which made good progress from Key Stage 1 to Key Stage 2 but where overall Key Stage 2 attainment was somewhat lower than at other schools serving similar intakes.

Over 90% of the 13,820 schools for which we have complete data and which have at least ten pupils in their 2016 KS2 cohort were above all three floor standards, while 180 would fall below all three.

Number of schools below each floor standard

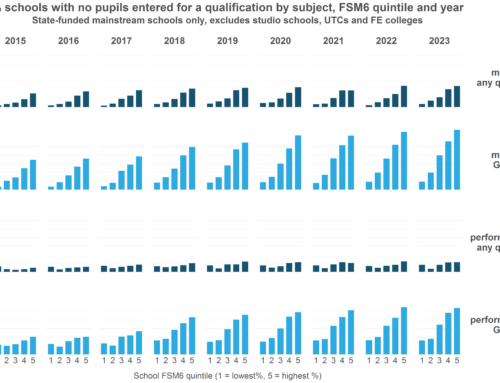

Under the current DfE floor standard, junior schools are slightly more likely (7%) to be below the floor than all-through primary schools (5%).

A floor standard based exclusively on KS1-KS2 value added would see almost 10% of junior schools fall below the floor. By contrast this rate falls to 2% based on the context-adjusted method, and just below 4% based on FSP-KS2 value added.

Some of the differences in the sets of schools identified by the different ways of defining the floor standard arise from using different Key Stage 2 indicators as the outcome measure. If we were to calculate a context-adjusted GRM score, the school-level correlation with the FSP-KS2 GRM measure would be 0.76 and the school-level correlation with the KS1-KS2 GRM measure would be 0.75.

If we were to define floor standards for the context-adjusted GRM score and for the FSP-KS2 GRM score (once again choosing the lowest performing 720 schools for each indicator) then 330 schools would be common to both sets.
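The comparison described above boils down to picking the lowest-scoring N schools on each measure and counting how many appear in more than one set. A minimal sketch with invented scores for six hypothetical schools (and N = 2 rather than 720) might look like this:

```python
from collections import Counter


def lowest_n(scores, n):
    """Return the set of school ids with the n lowest scores."""
    return set(sorted(scores, key=scores.get)[:n])


# Hypothetical scores on three measures; none of these are real results.
measures = {
    "context_adjusted": {"A": -5.2, "B": 5.4, "C": 0.0, "D": 4.6, "E": -4.8, "F": -1.0},
    "ks1_ks2_va": {"A": 0.5, "B": 1.2, "C": -2.0, "D": 0.3, "E": -3.1, "F": -0.4},
    "fsp_ks2_va": {"A": -1.5, "B": 0.9, "C": -0.2, "D": 1.1, "E": -2.7, "F": 0.1},
}

flagged = {name: lowest_n(scores, 2) for name, scores in measures.items()}

below_all = set.intersection(*flagged.values())  # flagged by every measure
below_any = set.union(*flagged.values())         # flagged by at least one measure

# How many measures flag each school.
times_flagged = Counter(school for group in flagged.values() for school in group)
```

In this toy data only school E is below the floor on all three measures, while A and C are each picked up by some measures but not others.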

There’s no other way

Value-added measures do not provide an index of school effectiveness that ranks schools from best to worst. Nonetheless, they are still likely to be the best way of identifying a set of poorly-performing schools that may benefit from state intervention given the data currently available.

Perhaps the identification of such schools could be improved by:

- Using a number of analytical approaches, including value added and possibly contextualised value added. If a number of methods lead to the same conclusion about a school’s performance then there is a firmer basis for action.

- Using one of the following measures of prior attainment in value added measures, in decreasing order of desirability:

  - A Reception baseline measure

  - The Foundation Stage Profile

  - Key Stage 1 test scores

- Calculating value added over a number of years given the small sizes of many primary school cohorts
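The last suggestion, pooling value added over several years, can be illustrated with a short sketch. It assumes a pupil-weighted average of yearly scores and a simple standard error that treats pupils as independent with a common spread. The cohort sizes, scores and the pupil-level standard deviation of 6 are all invented for illustration.

```python
def pooled_va(yearly):
    """Pupil-weighted average of yearly value added scores.

    yearly: list of (cohort_size, mean_va) pairs, one per year.
    """
    total_pupils = sum(n for n, _ in yearly)
    return sum(n * va for n, va in yearly) / total_pupils


def approx_standard_error(pupil_sd, n_pupils):
    """Rough standard error of a mean score, assuming independent pupils."""
    return pupil_sd / n_pupils ** 0.5


# Hypothetical small primary school: three cohorts of 12, 15 and 9 pupils.
yearly = [(12, -1.5), (15, 0.8), (9, -0.4)]
PUPIL_SD = 6.0  # assumed spread of pupil-level value added scores

three_year_va = pooled_va(yearly)
se_single_year = approx_standard_error(PUPIL_SD, yearly[0][0])
se_three_years = approx_standard_error(PUPIL_SD, sum(n for n, _ in yearly))
```

With 36 pupils instead of 12, the approximate standard error falls from about 1.7 to 1.0, so a pooled score is less likely to push a small school below a threshold through chance alone.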

